Recently, I’ve found a very interesting and substantive interlocutor: I can discuss any topic with it, get information, and even get help writing code. And yes, I should admit it’s no wonder that the movie “Her” may soon become a reality for some geeks.

Also, there are a bunch of YouTube videos that appeared overnight about “How to become a millionaire with ChatGPT” or “How to outsmart stock markets.”

So, what is all the hype about? Let’s dive in.

Table of Contents

- What Is ChatGPT?

- Why Did ChatGPT Attract So Much Attention?

- ChatGPT Use Cases

- GPT-3 and ChatGPT Limitations and Restraints

- Final Thoughts

What Is ChatGPT?

Simply put, ChatGPT is a very advanced chatbot that can give extensive answers to even complicated questions, write code snippets for you, correct grammar and punctuation, and so on. In short, it performs a range of tasks by generating text.

The recent excitement in the WordPress community was about the fact that it can write a plugin. What matters here is writing a clear and precise request.

A few words about AI models

Now, let’s look a bit under the hood. First of all, ChatGPT is a large language model (LLM) that can read, process, and generate human-like text in response to prompts. Simply put, LLMs are AI tools for understanding and predicting language. To do this, a model learns how humans speak, picking up the patterns and variations, and forms its own internal representation of how language works.

We humans are, in a way, trained language models too: with the neural network right inside our heads, we grasp the nuances of what others actually mean in a split second. But that holds only for a language we know really well. In a language we are less fluent in, we either miss many nuances or fall back on habits from our native language, which don’t always apply. So, as usual, artificial intelligence is trying to be more human-like: to sound natural and to understand what we really mean, ask, and need.

Several years ago, Google introduced BERT, a model that changed search results by inferring what people actually need rather than matching only the words they typed.

For example, before BERT, the search query “Can you get medicine for someone pharmacy” would return results about filling prescriptions, because the engine concentrated on the words “pharmacy” and “medicine.” With BERT, the engine understood that “for someone” plays a key role here and started to show articles about whether people are allowed to pick up medications for their family and friends. And there are many cases like this.

So, the developers of ChatGPT did a great job: they trained the model on a dataset of conversational text scraped from all kinds of Internet sources, including articles, books, and social networks, and then applied human-supervised fine-tuning, in which people gave feedback on its answers. ChatGPT is based on the GPT-3.5 model series, the most advanced LLM series to date, which consists of three “davinci” models for code and text.

It uses a transformer-based neural network architecture and has 175 billion parameters, making it one of the largest models of its kind.

The training finished in early 2022 on Azure AI supercomputing infrastructure.

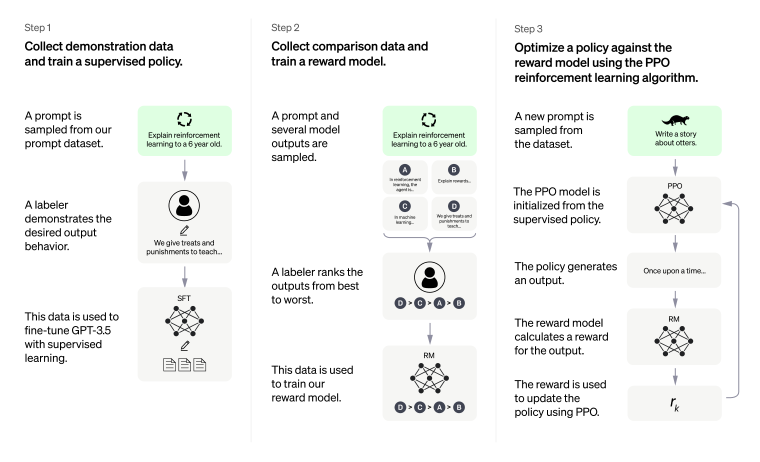

Model development

In a nutshell, AI models are sets of algorithms, and the training process has several major steps:

- Collecting and preparing the data: it must first be cleaned and preprocessed so the model can use it.

- Designing the model architecture.

- Training the model using the process called backpropagation, in which the model’s parameters are adjusted to minimize the error on the training data.

- Testing and evaluating the model on a separate dataset to see its performance.

- Fine-tuning and optimization.
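To make the steps above a bit more tangible, here is a minimal sketch in plain Python. It is not how ChatGPT is trained: the dataset, the one-line “model” (`w * x + b`), and the function names are all made up for illustration. It uses gradient descent with hand-written gradients, which is the parameter update that backpropagation supplies gradients for in a real neural network.

```python
import random


def make_dataset(n, seed=0):
    """Step 1: prepare data. Toy points near y = 2x + 1 (hypothetical example data)."""
    rng = random.Random(seed)
    data = []
    for _ in range(n):
        x = rng.uniform(-1.0, 1.0)
        y = 2.0 * x + 1.0 + rng.gauss(0.0, 0.01)
        data.append((x, y))
    return data


def mean_squared_error(w, b, data):
    """Average squared difference between predictions and targets."""
    return sum((w * x + b - y) ** 2 for x, y in data) / len(data)


def train(data, epochs=500, lr=0.1):
    """Steps 2-3: the model is w * x + b; adjust w and b to reduce the error."""
    w, b = 0.0, 0.0
    n = len(data)
    for _ in range(epochs):
        # Gradients of the mean squared error with respect to w and b.
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        # Move each parameter a small step against its gradient.
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


data = make_dataset(200)
train_set, test_set = data[:160], data[160:]  # hold out data for evaluation
w, b = train(train_set)

# Step 4: evaluate on data the model has not seen during training.
test_error = mean_squared_error(w, b, test_set)
print(f"w={w:.3f}, b={b:.3f}, test error={test_error:.6f}")
```

The learned `w` and `b` should land close to 2 and 1, the values used to generate the toy data. A large language model follows the same loop in spirit, only with billions of parameters instead of two.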

OpenAI, the company behind ChatGPT

OpenAI was founded in 2015 as a non-profit organization, and Elon Musk was involved along with Sam Altman. The company’s initial goal was to provide a framework for developing artificial intelligence in a way that benefits humanity.

Now, there is a non-profit, OpenAI Inc., and its for-profit subsidiary, OpenAI LP. The latter received a 1-billion-dollar investment from Microsoft in 2019, and this year, Microsoft is reportedly considering investing another $10 billion. Also, Microsoft has exclusive rights to the source code.

- In 2018, they released GPT (Generative Pre-trained Transformer), a language model trained on a large dataset of text.

- In 2019, the second generation, GPT-2, was released, with 1.5 billion parameters and the ability to generate fluent text.

- Finally, in 2020, OpenAI introduced GPT-3 with 175 billion parameters, which is a cut above GPT-2 and is able to perform tasks it was not explicitly trained for.

Developers can use GPT-3 and integrate it into their own projects through the OpenAI API.
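As a rough idea of what such an integration looks like, here is a sketch that only builds the HTTP request and does not send it. Treat the details as assumptions to check against the official API reference: the endpoint URL and JSON field names reflect the completions API as I understand it, the model name is one from the time of writing and may no longer be available, and `build_completion_request` is a hypothetical helper, not part of any OpenAI library.

```python
import json
import urllib.request

# Assumed endpoint; verify it in the official OpenAI API reference.
API_URL = "https://api.openai.com/v1/completions"


def build_completion_request(prompt, api_key, model="text-davinci-003", max_tokens=64):
    """Build (but do not send) a POST request asking the model to continue a prompt.

    The default model name is an assumption from the time of writing.
    """
    payload = {"model": model, "prompt": prompt, "max_tokens": max_tokens}
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


request = build_completion_request(
    "Write a haiku about WordPress.", api_key="YOUR_API_KEY"
)
print(request.get_method(), request.full_url)
```

With a real API key, the request could be passed to `urllib.request.urlopen`, and the generated text read from the JSON response.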

Competitors and alternatives

Developing AI models is a very costly process, so only Big Tech can afford it.

Most specialists name three leading players: OpenAI, DeepMind (Alphabet), and FAIR (Facebook). But there is also IBM Research AI, and we should not forget about Google Brain or China’s Baidu Research and Tencent AI Lab.

Why Did ChatGPT Attract So Much Attention?

ChatGPT gained over 1 million users in its first week, so it had to close registration. I’m not a Big Data analyst who can give a scientifically correct answer to this question, but the history of the most successful tech projects teaches us that when many factors line up, a product can become very successful and get all the hype. I also love the idea from Bill Gross, the founder of Idealab, that timing is what determines whether something becomes successful.

Other than that, there are a few facts that explain why we are all talking about ChatGPT these days:

- it’s really powerful and one of the largest LLMs in the world;

- it’s easily accessible;

- it had good media coverage;

- this chatbot can actually do some work for you: explain or write code, translate, and answer all kinds of questions (including some you can’t easily get answers to from Google);

- it gives you a feeling that you are talking to a human, so it feels very natural and engaging.

ChatGPT Use Cases

I’ll start with the simplest task: I asked it to write some HTML/CSS for me. It can write JavaScript for you, too.

I guess ChatGPT would not be satisfied with this, so I let it talk and brag about the best projects it has been involved in (these are its own claims, quoted as given):

- Microsoft has integrated ChatGPT into several of its products and services, such as its Azure Cognitive Services platform, which allows developers to easily add natural language processing capabilities to their applications.

- Google uses ChatGPT and other large language models for various natural language processing tasks, such as text generation, summarization, and answering questions.

- IBM has integrated ChatGPT into its Watson Assistant platform, which allows developers to create chatbots and other conversational applications.

- Salesforce has integrated ChatGPT into its Einstein AI platform, which allows developers to add natural language processing capabilities to their Salesforce applications.

Let’s Chat with ChatGPT

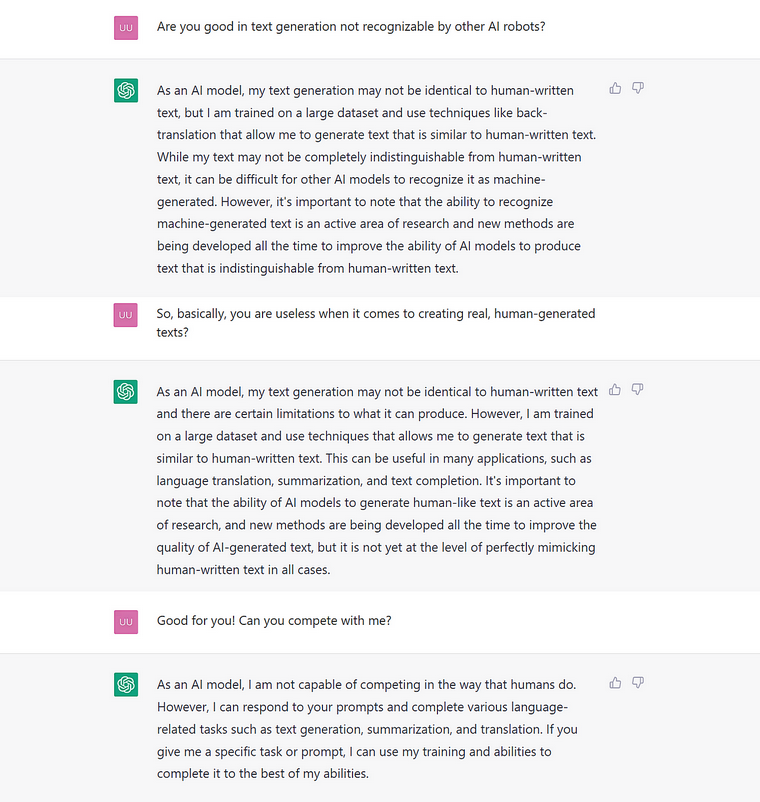

The AI is powerful, so I couldn’t resist asking it for advice and raising some serious questions that humans and robots can finally discuss:

Our future in the industry

AI creative writing

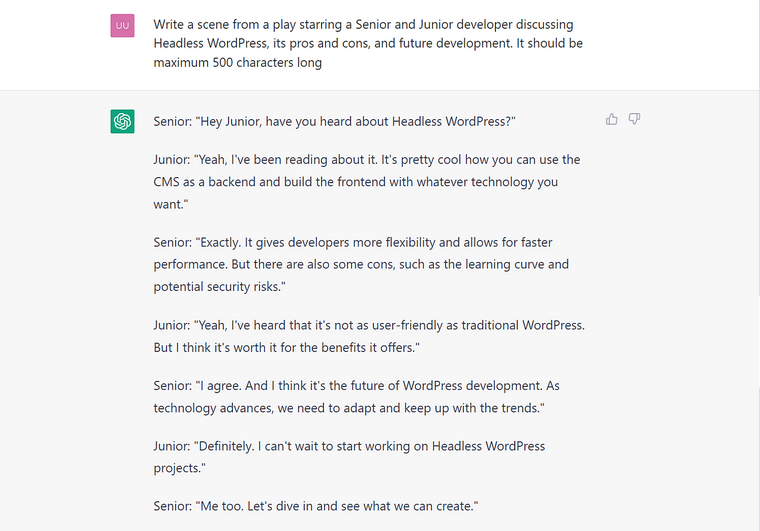

I was inspired by Marc Andreessen’s tweet and asked ChatGPT to create a short play where two developers talk about WordPress.

I actually tried many WordPress-related topics, and the results were pretty boring. If you don’t set a character limit, the bot will describe the room (as a rule, the developers are in a cluttered office or a large open space), give the characters names, and describe their movements. But if the scene has to be short, it doesn’t waste the character limit on all those frills. This is what I got:

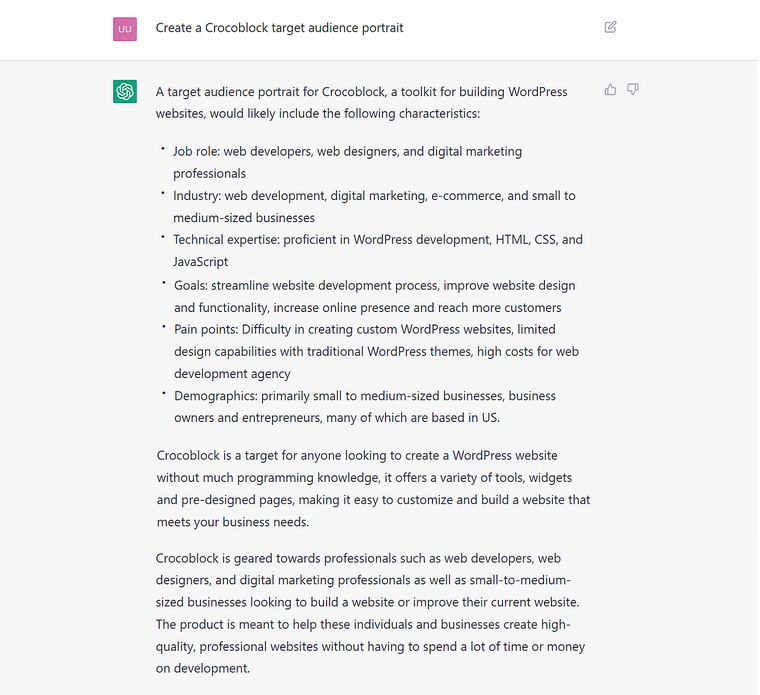

What does ChatGPT think about Crocoblock’s clients?

For sure, it’s impossible to resist asking AI about the meaning of life and other deep questions. But mine was more pragmatic, and I was curious whether it could replace some marketers and talk a bit about Crocoblock’s clients:

GPT-3 and ChatGPT Limitations and Restraints

Of course, after loud headlines about ChatGPT being a Google killer, there’s a fair question about this chatbot’s limitations, flaws, and potential dangers.

First of all, can it “kill” Google?

I absolutely don’t think so. It doesn’t have information about current events, and because its training data ends in 2021, its knowledge about the world is pretty outdated. Also, it doesn’t give you the sources of its information, so you can’t verify whether they are trustworthy, which brings me to the next limitation.

It’s biased and based on probabilities.

Even the main page of the chat states this. It generates text word by word, where each next word (“token”) is simply the most probable one. What counts as the most probable depends on the data the model was trained on, and this text data is a weird mix of basically everything. That’s why you can find discrimination and other biased behavior that can be harmful to some groups of people. Well, yes, you can rate an answer, and that will help fix the issue in the future.
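The “most probable next word” idea can be illustrated with a toy model. This is only a sketch under heavy assumptions: it counts which word follows which in a tiny made-up corpus and always picks the most frequent follower. Real LLMs work on tokens, use a neural network rather than a lookup table, and usually sample from the probabilities instead of always taking the top choice. The function names are hypothetical.

```python
from collections import Counter, defaultdict


def train_bigram_model(text):
    """Count, for every word, which words follow it and how often."""
    words = text.lower().split()
    counts = defaultdict(Counter)
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts


def generate(counts, start, max_words=5):
    """Extend `start` by repeatedly appending the most frequent next word."""
    output = [start]
    for _ in range(max_words):
        followers = counts.get(output[-1])
        if not followers:
            break  # the last word was never followed by anything in the corpus
        next_word = followers.most_common(1)[0][0]
        output.append(next_word)
    return " ".join(output)


# Tiny made-up corpus; the "knowledge" of the model is exactly this text.
corpus = "the cat sat on the mat . the cat sat on the rug . the dog ate the fish ."
model = train_bigram_model(corpus)
sentence = generate(model, "the", max_words=4)
print(sentence)  # the cat sat on the
```

Everything this toy model “knows” comes from its corpus, so any bias in the text shows up directly in the output. Here, “the” is always followed by “cat” simply because that pairing is the most frequent one.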

It can tell you nonsense with confidence.

It can mix up facts and fiction and give you well-composed answers that sound pretty reliable but are absolutely not. Again, it doesn’t show you the source of the initial information, so you can’t check it and evaluate whether it is trustworthy.

This ranges from incorrect code that fails to take many things into account to dangerous medical advice, recipes, or general statements and “facts” that can lead people to wrong conclusions, political views, and social attitudes.

So, common sense is something extremely important when you try to use AI for complex tasks and topics.

Considering all of this, it’s not surprising that Google hasn’t released its work in this area yet, even though “Sparrow,” from its sister company DeepMind, already exists. And it’s not the only one; we don’t know a lot about what’s happening behind the closed doors of Big Tech research labs.

Will all website content be AI-generated now?

Unlikely, and in addition to the reasons I’ve mentioned above, such texts can be easily detected.

Recently, news broke that ChatGPT-generated answers are banned from Stack Overflow because they are “substantially harmful”: people with little to no expertise started posting many answers that were often incorrect and that ignored a lot of context.

Final Thoughts

I love ChatGPT: it’s extremely useful for many tasks and truly engaging. But when it comes to claims that ChatGPT and other AI have an idea of what commonsense knowledge is, I have some doubts, because not every human has a clear idea of what it is.

That’s why, in my humble opinion, we can really enjoy “talking” to AI, and ChatGPT is one of the most exciting players in this field for now. At the same time, considering all the complexities, I think common sense remains our most important assistant: it leads the way, telling us what to believe and what to use. And let AI help us!